There is a gap in AI app builders that the marketing does not mention.

It is not a feature gap. The tools do what they say they do. It is a language gap. What separates the prompts that produce good outputs from the prompts that produce frustrating ones is not technical knowledge. It is the ability to describe, specifically, what you want to build before you start building it.

That sounds simple. It is not.

What the gap actually is

At the beginner end: "build me an app that helps my coaching clients track their goals." The AI builds something. It makes dozens of decisions without being told what to decide. The output is functional but generic. It does not match what was in the person's head because what was in the person's head was never made explicit.

At the experienced end: "build a single-page web app where a coaching client can log three goals at the start of a week, mark each one done or not done at the end of the week, and see a simple four-week streak view. No login required. Data stored locally. Mobile responsive. Tone should feel encouraging, not clinical."

Same basic idea. Completely different outputs. The difference is not technical knowledge. It is structured thinking about what the thing needs to do, for whom, with what constraints.

This is the hidden language gap. And it shows up not just in what the AI builds, but in how long it takes to get there, how many iterations are needed, and how much money is spent in the process.

Why the gap is hidden

Because the tools do not show you what you are missing.

When you prompt an AI builder with something vague, it does not say "I need more information." It generates something. The result looks reasonable. You iterate. You feel like you are making progress. You are actually in a refinement loop on a brief that was never clear enough to produce what you wanted.

The gap stays hidden because the tool keeps producing output. The cost of that hidden gap shows up in time, in tokens, in money spent on iterations that were never going to land where you wanted because the target was not defined.

Where the gap shows up most

The gap is felt most by coaches and service providers who know exactly what they want their tool to do but have never had to describe it in structured terms.

Take a health coach who knows, from experience, what her clients need in a goal-tracking tool: the exact inputs, the motivational framing, the weekly rhythm that works. She has never needed to write a technical specification for it. She does not know the vocabulary. She knows the thing she wants.

Translating that knowledge into a prompt that produces the right output is the skill that closes the gap. And it is a skill: learnable, practical, and nothing to do with being technical.

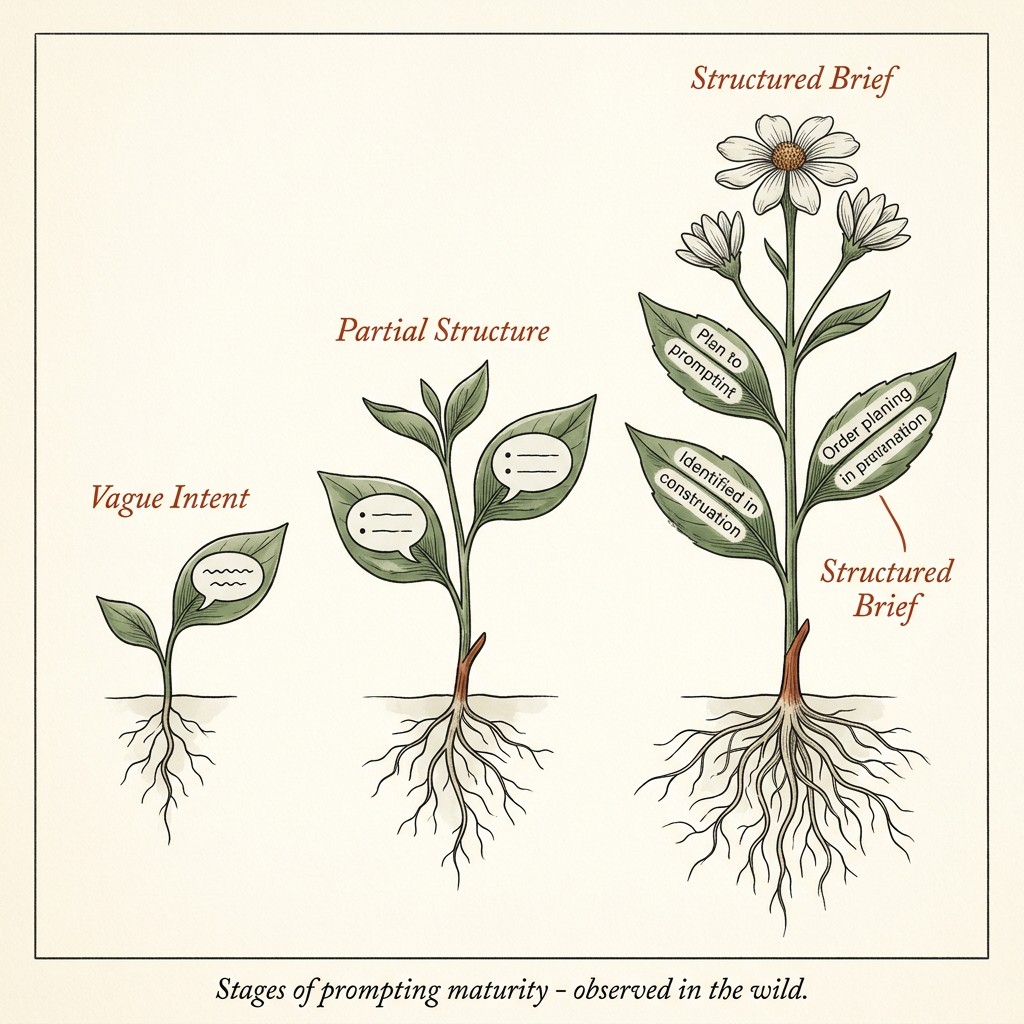

The staircase from vague to structured

"help my clients track goals."

"a simple tool for coaching clients to set three goals each week and check them off."

"a one-page web app for coaching clients to set three goals at the start of each week, mark them complete or incomplete on Friday, and see how their completion rate has changed over the last four weeks. Mobile-first. No login. Stored locally. Warm, non-clinical tone."

Each step adds: specificity about what the tool does, who it is for, how it works, what the constraints are, and what the tone should feel like. None of those additions require technical knowledge. They require knowing your own business well enough to say what you actually need.

What to do before you open a builder

Write the brief before you open the tool. Include:

Who this is for

One specific person with one specific situation.

What it needs to do

The core function in one or two sentences. Describe what it does, not what you hope it will become.

What it should not do

Constraints are as important as requirements. "No login required," "no more than five inputs," "single page only" all reduce the decision space and produce tighter outputs.

What the tone or feel should be

This sounds soft but it is not. "Warm and conversational" versus "clean and clinical" produces meaningfully different interfaces.

One example or reference

"Something like [this] but for [my specific audience]" gives the AI an anchor.

That brief, even rough, even handwritten, will do more for the quality of what the AI builds than any technical knowledge.
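If it helps to see the brief as a checklist, here is a minimal sketch in Python. You do not need code to write a brief; this only shows the same five parts as fields you fill in. The `Brief` class, its field names, and the wording of the assembled prompt are all made up for illustration and are not part of any AI builder.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Brief:
    """Hypothetical container for the five parts of the brief described above."""

    audience: str                      # who this is for
    function: str                      # what it needs to do
    constraints: list[str] = field(default_factory=list)  # what it should not do
    tone: str = ""                     # what it should feel like
    reference: str = ""                # one example or reference

    def missing_parts(self) -> list[str]:
        """Return the names of any parts that are still empty."""
        parts = {
            "audience": self.audience,
            "function": self.function,
            "constraints": self.constraints,
            "tone": self.tone,
            "reference": self.reference,
        }
        return [name for name, value in parts.items() if not value]

    def to_prompt(self) -> str:
        """Assemble the filled-in parts into one plain-text prompt."""
        lines = [
            f"Build this: {self.function}",
            f"Who it is for: {self.audience}",
        ]
        if self.constraints:
            lines.append("Constraints: " + "; ".join(self.constraints))
        if self.tone:
            lines.append(f"Tone: {self.tone}")
        if self.reference:
            lines.append(f"Reference: {self.reference}")
        return "\n".join(lines)


brief = Brief(
    audience="a coaching client who checks in once a week",
    function="a one-page web app to set three goals each week and mark them done",
    constraints=["no login", "data stored locally", "mobile-first"],
    tone="warm, non-clinical",
)

print(brief.missing_parts())  # ['reference']
print(brief.to_prompt())
```

The useful part is `missing_parts`: it shows which pieces of the brief are still blank before you spend an iteration on them.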

FAQ

What makes a prompt "structured" versus vague?

Specificity about outcome, audience, constraints, and tone. A structured prompt answers "what does it do, for whom, what are the rules, and what should it feel like" before asking the AI to build anything.

Do I need to learn technical terms to improve my prompts?

No. Specificity about your own business is more valuable than technical vocabulary. "My clients are busy parents who check in once a week" is more useful than knowing what a REST API is.

Why does vague prompting feel productive even when it is not?

Because the AI always produces something. The output looks like progress. The gap only becomes visible when you realize, after many iterations, that you still do not have what you wanted.

How many iterations should it take to get a good output?

For a simple tool with a clear brief: two or three rounds. If you are past five and still not close, the brief needs work, not more iterations.

Is this something AI builders will fix over time?

Partly. Better tools ask better questions before generating. But the person building still needs to know what they want. That part is not something a tool can do for you.
