The pitch for AI tools aimed at non-technical users is always some version of the same thing: just describe what you want in plain English and it builds it for you. No code. No training. Just talk to it like a person.

That pitch is half true.

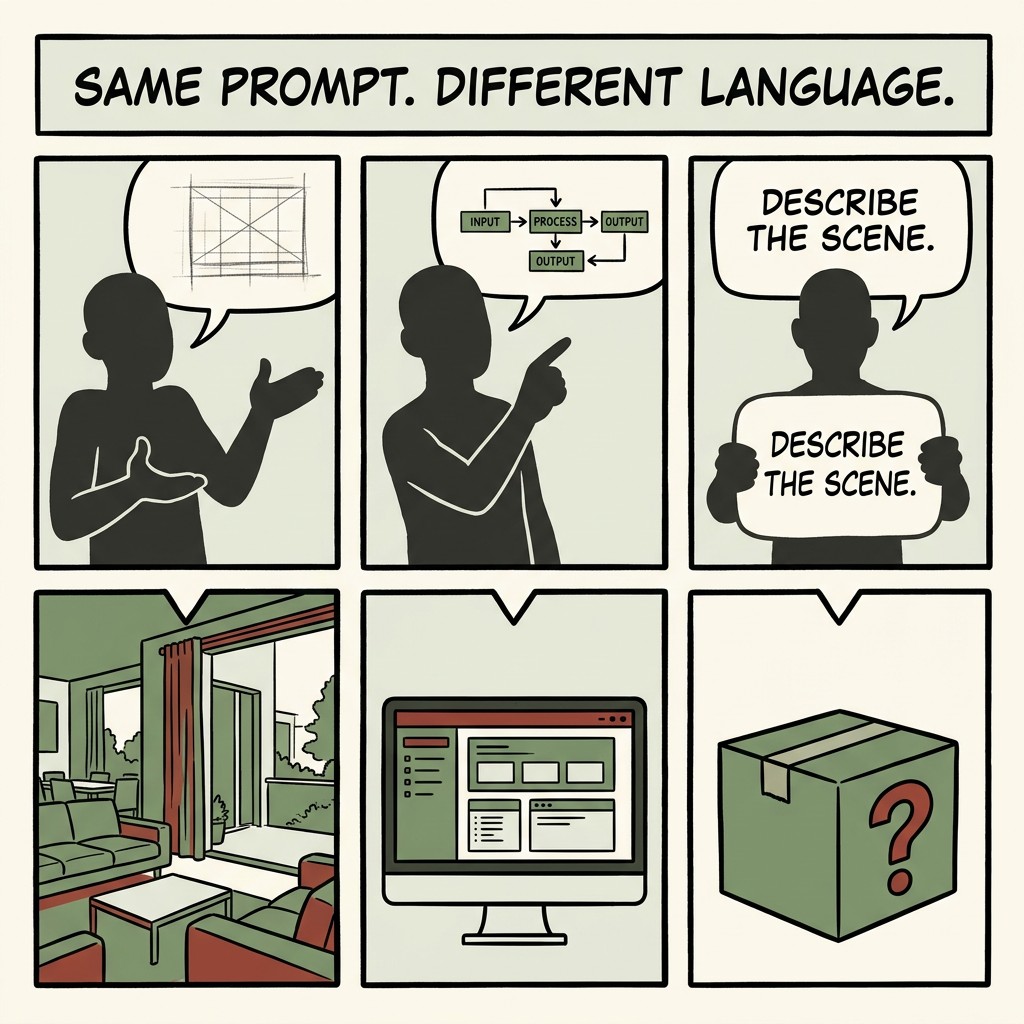

You can describe what you want in plain English. But "plain English" covers an enormous range of specificity, context, and structure. The gap between what a senior developer means when they say "build me a simple dashboard" and what a first-time user means when they say the same thing is significant. The AI tool receives both as natural language. What each person adds next, and so what the tool ends up producing, is very different.

Natural language is not a universal input. It is a starting point. And how far it gets you depends heavily on what you already know how to say.

Three users asking for the same thing

Consider three people asking an AI builder to create a client intake form for their coaching business.

The first is a designer. She describes the intake form in terms of layout, flow, and visual hierarchy. She specifies the field order, mentions that she wants a progress indicator, notes the form should feel conversational rather than clinical. The AI receives a clear visual and structural brief. The output is close to what she had in mind.

The second is someone with a technical background. He describes the form in terms of data fields, validation logic, and where the data should go after submission. He mentions a webhook. He specifies required versus optional fields. The AI receives a clear functional brief. The output is close to what he had in mind.

The third is a coach who has never built a form before. She says: "I need an intake form for new clients so I can understand what they need before our first call." She knows exactly what she wants the form to do. She cannot describe it in the language that produces a clear brief. The AI makes assumptions. The output is generic. She iterates. She is not sure whether the next version is better or just different.

All three used natural language. All three got different results. Not because the tool is unfair, but because the tool responds to the specificity and structure of the input it receives.

Why this matters for coaches and creators

The people most likely to feel the gap between the promise and the reality of AI tools are the people who are excellent at their work but have not previously needed to translate that expertise into technical or structural language.

A coach knows exactly what a good intake form achieves. She knows what questions reveal the most about a client's situation. She knows what she needs to understand before a first call. That is genuine expertise. The AI cannot access it unless she can describe it specifically.

This is not a failure of intelligence or technical ability. It is a vocabulary gap. The people who get the best results from AI tools are the ones who have learned a second language: the language of structured prompting. It is learnable. But it is not automatic, and it is not what "just describe what you want" implies.

What structured prompting actually involves

Outcome before format

Instead of "create a form," say "I need to collect the following information from a new coaching client before our first session: [list the actual things you need to know]." The AI can work with a list of requirements. It cannot read your mind.

Context about the user

"My clients are women in their 40s who are returning to work after a career break" produces different output than "my clients." The more specific the audience, the more the tool can calibrate the tone, language, and complexity.

Constraints

"Under ten questions, conversational tone, no clinical language" is a constraint set. Constraints reduce the space of possible outputs and make it easier for the AI to land in the right area.

Examples

"Something like [this type of form] but for [this specific purpose]" gives the AI a reference point. You do not need to use technical vocabulary if you can gesture toward an example.
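Taken together, the four parts above amount to a short written brief. As a minimal sketch, assuming you wanted to capture that brief in code, the Python below assembles the parts into one block of text you could paste into any AI tool. The `Brief` class and its field names are hypothetical, invented for illustration, and are not part of any real tool.

```python
from dataclasses import dataclass, field


@dataclass
class Brief:
    """Hypothetical container for the four parts of a structured prompt."""
    outcome: str                                           # what the result must achieve
    audience: str = ""                                     # who will use it
    constraints: list[str] = field(default_factory=list)   # limits on the output
    example: str = ""                                      # a reference point, if you have one

    def to_prompt(self) -> str:
        """Join the parts that were filled in, in a fixed order."""
        parts = [f"Outcome: {self.outcome}"]
        if self.audience:
            parts.append(f"Audience: {self.audience}")
        if self.constraints:
            parts.append("Constraints:")
            parts.extend(f"- {c}" for c in self.constraints)
        if self.example:
            parts.append(f"Reference: {self.example}")
        return "\n".join(parts)


# Illustrative values taken from the coaching example in this post.
brief = Brief(
    outcome="Collect what I need to know about a new coaching client before our first session",
    audience="Women in their 40s who are returning to work after a career break",
    constraints=["Under ten questions", "Conversational tone", "No clinical language"],
)
print(brief.to_prompt())
```

Nothing here requires code; a note on paper does the same job. The point of the sketch is that only the outcome is mandatory, and every other part you can fill in narrows what the tool has to guess.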

The solution is not to know more jargon

The solution is to know more about your own work and be able to say it specifically.

A coach who can describe, in plain terms, what she learns from a good intake form, what her clients struggle to articulate, and what she needs to understand before a first call, will get better outputs than someone who uses technical vocabulary but does not know what they actually want.

The vocabulary of structured prompting is partly technical and partly just specific. Specificity is the bigger lever. And specificity about your own expertise is something you already have. The work is learning to surface it in the brief.

FAQ

Why do different people get such different results from the same AI tool?

Because the tool responds to the specificity and structure of the input. Detailed, contextual prompts tend to produce better outputs than vague ones, regardless of how "natural" the language sounds.

Do I need to learn technical language to use AI tools effectively?

No. You need to be specific about what you are asking for: who it is for, what it needs to do, and what the constraints are. Technical vocabulary helps in some contexts, but specificity is the bigger factor.

Is this a problem with the AI tools?

Partly. Tools that promise "just describe what you want" set an expectation they cannot fully meet. The gap between that promise and the reality is real. It can also be closed with practice.

How do I get better at prompting without taking a course?

Start by writing down what you want before you open the tool: who it is for, what it needs to do, and what it should not include. That brief, even a rough one, will usually produce better outputs than jumping straight to a prompt.
